Eleanor Dare

Visiting Lecturer, Royal College of Art, London

Email: eleanor.dare@rca.ac.uk

Web: https://www.rca.ac.uk/more/staff/dr-eleanor-dare/

Reference this essay: Dare, Eleanor. “Rear Psycho: Hitchcock’s horror language mediated by AI.” In Language Games, edited by Lanfranco Aceti, Sheena Calvert, and Hannah Lammin. Cambridge, MA: LEA / MIT Press, 2021.

Published Online: March 15, 2021

Published in Print: To Be Announced

ISBN: To Be Announced

ISSN: 1071-4391

Repository: To Be Announced

Abstract

To create a strong AI (one which replicates human intelligence) would necessitate an understanding of the relationship between language and thought; but human languages encompass more than written and spoken communication: they are also manifest in images, sounds, artefacts, places and practices. The complexity of language is therefore immense, perhaps intractable, leaving us with the question: how can computational natural language understanding (NLU) and computer vision hope to untangle the complex situated and cultural encoding of human language? Understanding the historical trajectory of AI, while also evolving a methodology and related methods to understand the ontological status of artificial language understanding, is the key theme of this essay. By cutting Hitchcock’s films Psycho and Rear Window into small ‘language games,’ the essay presents the case that tensions between Turing’s and Wittgenstein’s understandings of language are still manifest in contemporary algorithmic processes and rhetoric. Using image auto-tagging, verbal summaries, scene prediction and style transfer algorithms, practical and theoretical experiments address the limits and potential of symbolic representation and machine learning to understand the language and thoughts of humans and other animals. The essay evidences what happens in specific cases when machine-driven effort is engaged to translate embodied and cultural meanings embedded in film, a ground-truthing method articulated here as a form of language game.

Keywords: AI, logic, Wittgenstein, natural language understanding, Hitchcock

Introduction and Background to Symbolic Logic and Artificial Languages

In 2018 the magazine Dazed ran an article entitled “Meet Norman: the ‘psychopath’ AI trained on violent Reddit content.” [1] The article described MIT’s image captioning algorithm, anthropomorphized under the name ‘Norman,’ which was trained on violent content from Reddit. The sensationalism of the article missed the most salient point of the mission to develop such an algorithm: the quest for an AI which can understand human language. Such a project is essentially the quest to discover what Wittgenstein characterized as ‘the hidden essence’ of thought; [2] the goal depends upon the assumption that language is the same as thought, an Aristotelian premise which Wittgenstein repudiated in his later works. To investigate the premise that Wittgenstein’s two most famous texts, Tractatus Logico-Philosophicus [3] and Philosophical Investigations, [4] can still provide insights into the limits of natural language understanding, two well-known films, Psycho [5] and Rear Window, [6] are used here to clarify the relationship of language and knowledge to recent manifestations of artificial intelligence.

In the Tractatus Logico-Philosophicus, Wittgenstein stated:

Most of the propositions and questions to be found in philosophical works are not false but nonsensical. Consequently, we cannot give any answer to questions of this kind but can only point out that they are nonsensical. Most of the propositions and questions of philosophers arise from our failure to understand the logic of our language. […] All philosophy is a ‘critique of language.’ [7]

At the time of writing the Tractatus, Wittgenstein was committed to the idea that symbolic logic could atomize and make clear the nature of our thinking; he stated: ‘Philosophy aims at the logical clarification of thoughts.’ [8] In the Tractatus, Wittgenstein presents a ‘sign-language,’ or system of logical propositions, which he hoped would erase the errors and ambiguities of human language. This is the epistemic tradition from which Good Old-Fashioned Artificial Intelligence (GOFAI) evolved. GOFAI operated from a paradigm of intelligence as the manipulation of logical symbols. However, GOFAI failed to deliver on the hyperbolic promises of its proponents, leading to the so-called AI Winter of the late 1980s and early 1990s, a period in which research funding for symbolic AI was largely withdrawn.

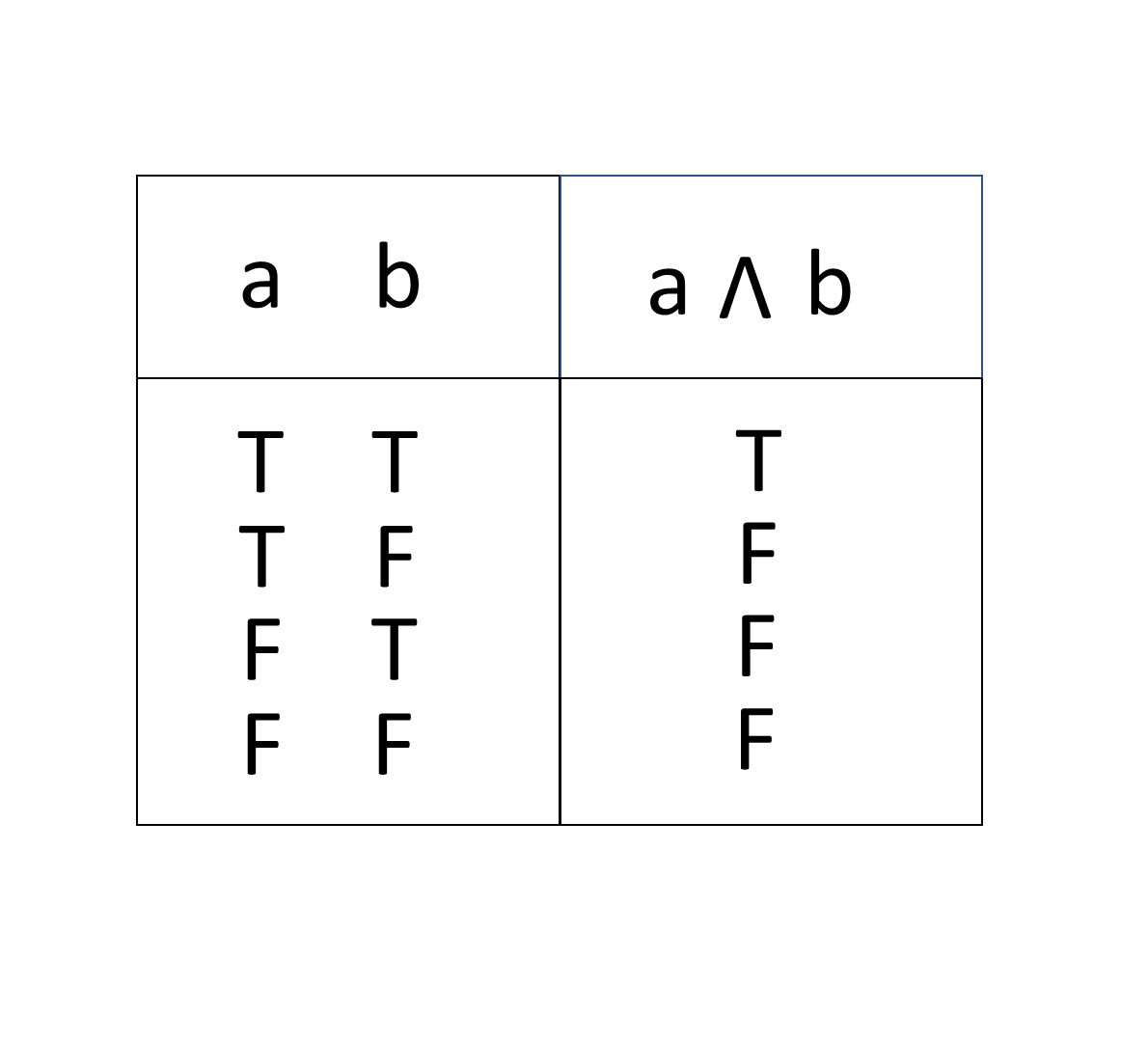

The failure of symbolic manipulation to generate a strong general artificial intelligence relates to the symbol grounding problem, meaning the question of how we connect our symbols for things to the things themselves. For humans the symbol grounding problem is solved by our sensory, embodied relationship to the world, our non-separation from it, and our ability to act in the world. Through his work on the Tractatus, Wittgenstein is often cited as the inventor of truth tables (though others, such as Emil Leon Post and Charles Sanders Peirce, developed similar representations of functional values). Truth tables systematically establish the validity of truth-functional arguments in propositional logic (propositional calculus), in which every proposition is binary: either true or false. In digital electronics and computing, truth tables reduce Boolean logic to a visual representation of switching functions. For example, in the truth table for the logical conjunction A AND B, where AND (&) is written with the symbol ∧, the result is True (T) only when both logical operands, A and B, are True.
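As a minimal sketch, written in Python purely for illustration, the truth table for A ∧ B can be generated mechanically by enumerating every assignment of truth values to the two operands. The helper names `conjunction`, `truth_table` and `show` are hypothetical, introduced only for this example.

```python
from itertools import product


def conjunction(a, b):
    # A ∧ B: true only when both operands are true.
    return a and b


def truth_table(connective):
    """Return (A, B, result) rows for a two-place truth-functional connective."""
    # product([True, False], repeat=2) yields (T, T), (T, F), (F, T), (F, F).
    return [(a, b, connective(a, b)) for a, b in product([True, False], repeat=2)]


def show(value):
    # Render a Boolean in the T/F notation used in truth tables.
    return "T" if value else "F"


if __name__ == "__main__":
    print("A  B  A AND B")
    for a, b, result in truth_table(conjunction):
        print(f"{show(a)}  {show(b)}  {show(result)}")
```

Only the first row, where both A and B are T, yields T. Passing a different two-place function (for example one returning `a or b`) would produce the corresponding table for disjunction.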

How we get from Truth Tables to a belief in Whole Brain Emulation (WBE), or the “computer emulation of brain structures sufficient to functionally reproduce human cognition,” [9] is via a philosophical continuum which constitutes human intelligence as representable by symbolic logic. It has been said that: “Ironically, AI seemed to have adopted the conceptual framework of Wittgenstein’s Tractatus shortly after the realities of language use had driven Wittgenstein himself to abandon it.” [10] Wittgenstein’s texts still represent some of the most acute tensions inherent in any discussion about the limits of language to represent the problems of philosophy and wider questions of what constitutes knowledge. Strong artificial intelligence, the project of forming a computational model of human intelligence, is above all a philosophical undertaking; it has been described as a form of philosophical engineering: “Philosophy deals with concepts that are inherently tricky to define such as knowledge, meaning, reference, reasoning, and all of them are considered to be essential for intelligent behavior. This is why, in a broad sense, AI is the engineering of philosophical concepts.” [11]

Wittgenstein’s and Turing’s contributions to logic also underpin modern electronics and computational logic. Without truth tables and logic gates there would be no AI; likewise, the Turing Test represents “a defining inspiration in the early history of AI research. Even now, some researchers take passing the Turing Test as fundamental to the field of AI research,” [12] although Margaret Boden suggested, in 2006, that for “AI researchers, the question is no longer, ‘What should we do to pass the test?’ but, ‘Why can’t we pass it?’” [13]

Despite Wittgenstein’s initial ambitions to formalize language, the Tractatus and the Philosophical Investigations contradict each other. In the first book, Wittgenstein maintains a belief in the ability of logic to represent language clearly, and this implies, in an oblique way, that it can represent human thought. In the Philosophical Investigations, by contrast, Wittgenstein maintains that language derives its meaning from use, [14] in other words, from a non-cognitive foundation. This is a radical departure, not only from his own earlier assertions about the nature of language and logic, but from the historical lineage of logic going back to Aristotle. Its implications for AI, both symbolic and connectionist, are still significant. Neural networks are a rejection of symbol manipulation; they instead use a connectionist approach, modeling parallel networks of nodes which loosely resemble networks of neurons in the brain. In connectionist models, actions emerge not from formal rules but from networks of mathematical functions. However, despite the significant paradigm shift connectionism represents, the goal of language understanding is still, arguably, predicated on the idea of language as a purveyor of unsituated, transparent meanings, separable from action, contingency and culture.

In the Philosophical Investigations, Wittgenstein famously asserted: “If a lion could speak, we couldn’t understand him.” [15] Wittgenstein’s point is that the implicit articulation of knowledge and language is embodied and enacted. This is a direct challenge to GOFAI, and to the belief that symbolic representation and logical symbol manipulation can represent (human) intelligence and act intelligently in the world. But it is also a challenge to non-symbolic AI, to machine learning and connectionism. Considering Wittgenstein’s later position, how can a computer system of any kind understand humans, our languages and thoughts, let alone emulate human intelligence? By the time he wrote the Blue and Brown Books, Wittgenstein stated:

Philosophers very often talk about investigating, analyzing, the meaning of words. But let’s not forget that a word hasn’t got a meaning given to it, as it were, by a power independent of us, so that there could be a kind of scientific investigation into what the word really means. A word has the meaning someone has given to it. [16]

Ontologically, the status of computation itself is unclear, whether it is the enaction of material processes or non-physical abstractions constructed from mathematics and symbolic logic:

The exact nature of computer programs is difficult to determine. On the one hand, they are related to technological matters. On the other hand, they can hardly be compared to the usual type of inventions. They involve neither processes of a physical nature, nor physical products, but rather methods of organization and administration. They are thus reminiscent of literary works even though they are addressed to machines. [17]

McQuillan’s description of data science as “the operation of machinic metaphysics that travels like a resonant wave through the medium of our scientific culture” [18] is a reminder of AI’s genealogy. As in the wider field of data science, the formal imperatives of machine learning are neoplatonic and essentially aesthetic, predicated on an idealized, formal order: “Data science can be understood as an echo of the neo-platonism that informed early modern science in the work of Copernicus and Galileo. That is, it resonates with a belief in a hidden mathematical order that is ontologically superior to the one available to our everyday senses.” [19]

Again, it is important to acknowledge, as McQuillan does, the many meanings ascribed to such terms as data science, AI and intelligence. To better understand McQuillan’s critique of ‘data science’ as neoplatonic, it is useful to return to the ontological status of AI and machine learning, to look at its historical trajectory, and at the many overlaps between philosophy, mathematics and logic. The genealogy of computation has a direct line to Aristotle, [20] in the form of his deductive, syllogistic logic, or what his followers, the Peripatetics, called the Organon: tools for logical argument about the nature of the world and human thought. Aristotle’s syllogisms take the form of logical propositions such as the (updated) syllogism below:

All men are mortal

Norman Bates is a man

Norman Bates is mortal

These propositions are not contingent on observation; they are non-inductive, deductive forms, relying on ‘top-down’ reasoning. Contradictions and tautologies are useful in pushing the limits of such propositions. A tautology is always true, for example: “Either Norman Bates is a murderer or Norman Bates is not a murderer.” This statement cannot be false, whatever the facts about Norman Bates. A contradiction, the opposite of a tautology, is always false: a proposition such as “He is a murderer and not a murderer” asserts logically incompatible conclusions. For Wittgenstein, contradictions and tautologies are senseless but not nonsense; they are like language games: “they show what they say,” they are “part of the symbolism of arithmetic,” but they are “not pictures of reality.” [21] Contradictions and tautologies reveal the limits of both human and artificial languages.
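The distinction can be checked mechanically, in the spirit of the truth-table account in the Tractatus: a formula is a tautology if it is true under every assignment of truth values, a contradiction if it is false under every assignment, and contingent otherwise. The Python sketch below is illustrative only; `classify` and the three example formulas are hypothetical names, with P standing for the proposition “Norman Bates is a murderer.”

```python
from itertools import product


def classify(formula, n_vars):
    """Evaluate a truth-functional formula under every assignment of truth values."""
    results = [formula(*values) for values in product([True, False], repeat=n_vars)]
    if all(results):
        return "tautology"      # true whatever the facts are
    if not any(results):
        return "contradiction"  # false whatever the facts are
    return "contingent"         # truth depends on how the world is


# P stands for "Norman Bates is a murderer" (a hypothetical encoding).
def p_or_not_p(p):
    return p or not p


def p_and_not_p(p):
    return p and not p


def just_p(p):
    return p


if __name__ == "__main__":
    for name, formula in [("P or not P", p_or_not_p),
                          ("P and not P", p_and_not_p),
                          ("P", just_p)]:
        print(f"{name}: {classify(formula, 1)}")
```

Only the third formula needs the world, or a film, to settle its truth; the first two are settled by their form alone, which is one way of seeing why they are ‘not pictures of reality.’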

Though a linear account of mathematical and logical developments is simplistic, in canonical accounts of Western logic and artificial languages it is Gottlob Frege’s Begriffsschrift of 1879 [22] which introduces modern logic in the form of a formal calculus, an extension of Leibniz’s laws, via the use of quantifiers (such as ‘all’ and ‘some’) and connectives (such as AND ∧, OR ∨, NOT ¬), as well as Boolean algebra. [23] Russell and Whitehead’s predicate calculus, as outlined in Principia Mathematica, [24] then provided a system for representing the complex underlying logic of sentences, and with it the logical foundations and symbolic notation for GOFAI and wider computation.

The ontological status of language understanding since the evolution of GOFAI has been central to the author’s work over the last several years, with projects developing context-sensitive chatbots, interactive storytelling mechanisms for ‘understanding’ human language, brain-driven devices and other ‘interfaces’ testing the limits of language to represent thought. In that time, artificial intelligence has become more visibly embedded in everyday life, with systems such as PowerPoint’s automatic captioning service, computer vision (detection and correction processes) on many mobile phones, and facial recognition, which has become a standard facet of social media platforms such as Facebook. By engaging critically with machine learning processes which are now easily accessible, the author presents an evaluation of current AI and language understanding as it exists in our everyday lives. Testing, or indeed ‘ground truthing,’ technologies against the claims made for them is here presented as a valid continuation of Wittgenstein’s language games.

Language Games as Method

The term ‘language joke’ is Wittgenstein’s articulation of the limits of language, and the idea of ‘language games’ is his way of alerting us to those limits. Since Wittgenstein’s texts were written, there have been significant shifts in both the politics of knowledge and the discourse addressing artificial intelligence and its instrumental subset, machine learning. What, then, are the current limits of symbolic and non-symbolic AI to emulate human language intelligence? To address these issues, a series of language games were undertaken, using the example of Hitchcock’s two famous films, Psycho and Rear Window, to ground the question of what language and AI can represent within well-known cultural references, albeit those of Hollywood in the 1950s and early 1960s. Language games are Wittgenstein’s way of revealing the nature of language: like a game, language has rules, but also “family resemblances,” [25] as with the terms Olympic Games, ball games and card games, so that “games form a family,” [26] albeit often an unpredictable, culturally specific, dynamic family, a family which is relational and contingent. This is a significant shift from his thinking in the Tractatus.

Playing such language games is an attempt to create a method for investigating the ontological and ethical limits of natural language understanding (NLU) and computer vision, to extend Wittgenstein’s questions into a contemporary context. Both of the Hitchcock films used to support the methods outlined here were selected because they are accessible online, in the form of short clips and film stills, as well as essays analyzing the architecture, [27] sound, [28] psychology and cultural legacy, [29] avian symbolism [30] and sexual politics [31] of Hitchcock’s most famous works. The ability to parse (formally analyze and break down into constituent parts) language is still one of the grand challenges of artificial intelligence. In the summer of 2019, the author carried out a series of tests or language games in an attempt to evaluate the current potential of publicly accessible machine learning systems to understand human language. These games have been used by the author in teaching situations and discussions with colleagues, as a discursive means to evaluate both the limits and potential of machine learning.

The first test or language game involved the aisummarizer application, which its developers claim is designed to make reading more ‘efficient.’ Cornell Woolrich’s short story “It Had to Be Murder,” [32] upon which Rear Window was based, was run through the interface. “It Had to Be Murder” is a very short story of twelve pages; it is not a complex narrative to summarize. The machine learning system used for this language game generated the following fifty-two-word summary:

“And try not to let anyone catch you at it.” He went out mumbling something that sounded like, ‘When a man ain’t got nothing to do but just sit all day, he sure can think up the blamest things.’ The door closed and I settled down to some good constructive thinking”. [sic] [33]

A human summary of the same length is as follows:

The story that inspired the Alfred Hitchcock film masterpiece, Rear Window! It Had to Be Murder is a suspenseful tale about Hal Jeffries, a temporarily disabled man, who becomes obsessed with watching the lives of his urban neighbors. Seated in a chair by his rear window, Jeffries believes he has witnessed murder. [34]

What is interesting about the automated result is its notable lack of insight into what creates drama for human readers: in this example, a disabled man trapped in an apartment, thinking he has witnessed a murder. The AI summary opens with a non-sequitur and closes with a sentence which adds nothing to our understanding of the story. Attempts to summarize several other texts produced similarly jumbled results.
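The essay does not say how aisummarizer selects its text, so the following is not that application’s method. It is a generic, hypothetical sketch of naive extractive summarization in Python, assuming that sentences are ranked by the average frequency of their non-stop-words across the whole text. Because a method of this kind selects sentences on lexical statistics alone, with no model of plot or character, it can lift lines of dialogue out of their context, which is one plausible route to the kind of non-sequitur described above.

```python
import re
from collections import Counter

# A small, illustrative stop-word list (an assumption; real systems use larger ones).
STOP_WORDS = {"a", "an", "and", "the", "to", "of", "in", "he", "i", "it",
              "was", "is", "at", "his", "my"}


def summarize(text, n_sentences=2):
    """Naive extractive summary: keep the n highest-scoring sentences in original order."""
    # Split after sentence-final punctuation that is followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    words = re.findall(r"[a-z']+", text.lower())
    freq = Counter(w for w in words if w not in STOP_WORDS)

    def score(sentence):
        tokens = [w for w in re.findall(r"[a-z']+", sentence.lower()) if w not in STOP_WORDS]
        # Average word frequency, so long sentences are not automatically favoured.
        return sum(freq[w] for w in tokens) / len(tokens) if tokens else 0.0

    # Rank sentence indices by score (ties keep their original order), then restore text order.
    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:n_sentences])
    return " ".join(sentences[i] for i in keep)
```

The stop-word list and the averaging rule are arbitrary choices made for this sketch; nothing in the scoring registers who is speaking, or why a scene matters.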

Game two involved a scene prediction system used on an image from Rear Window. The MIT “Places dataset” (training data) is used to identify “deep scene features.” [35] The still from Rear Window depicted the murderer, Lars Thorwald, as he wraps a huge knife in newspaper, having murdered and dismembered his wife. The Places dataset consists of 10 million images, with “5000 to 30,000 training images per class,” and is:

designed following principles of human visual cognition. Our goal is to build a core of visual knowledge that can be used to train artificial systems for high-level visual understanding tasks, such as scene context, object recognition, action and event prediction, and theory-of-mind inference. [36]

The Places algorithm accurately predicted that the scene from Rear Window showed an indoor environment, a “clean room,” with scene attributes: no horizon, man-made, enclosed area, cloth, indoor lighting, metal, wood, working, vertical components. Using the same prediction algorithm for a still from the shower scene in Psycho, the algorithm predicted correctly that it was an indoor scene; it categorized it as a dressing room, with scene attributes: no horizon, enclosed area, cloth, man-made, indoor lighting, natural, competing, stressful, natural light.

There are other, more sophisticated algorithms which attempt to predict the next event in a video sequence. For example, MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) [37] has developed a system which uses generative adversarial networks, neural networks that compete with each other: in this case, one network generates videos, while another tries to determine whether those videos are ‘fake.’ The system can also discriminate between the foreground and background of video footage. This may be useful for events occurring within a constrained, predictable environment, such as trains in motion or factory floors, but it is limited for complex environments and for emulating highly complex manifestations of human creativity, such as Hitchcock’s filmmaking. The predictions based on Hitchcock film stills are devoid of narrative insight. The presence of a knife and of a screaming woman is, in these examples, not computed; both are effectively redacted, leaving no connection between atomized elements, scene and drama, and producing disconnected, unsituated deductions.

Game three involved a style transfer algorithm in TensorFlow, an open source machine learning platform. Style transfer algorithms use convolutional neural networks [38] to separate style from content and then transfer that style into another image or sound while preserving the latter’s content. Though they revealed little about the specific meanings of Psycho or Rear Window, two style transfer algorithms successfully extracted visual ‘style’ from the shower scene in Psycho and transferred it to a scene from Rear Window. Likewise, when creating a musical transfer from the Psycho soundtrack to that of Rear Window, the algorithm separated style and content effectively, distinguishing melody from rhythm to create what (to the author’s ear, at least) sounds like a more sinister version of Franz Waxman’s Rear Window melody, punctuated by Bernard Herrmann’s stabbing rhythm from the Psycho shower scene.
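One widely used formulation of neural style transfer, that of Gatys, Ecker and Bethge (the essay does not name the algorithms it used, so whether they follow this formulation exactly is an assumption), represents ‘style’ as correlations between the feature maps of a convolutional layer, summarized in a Gram matrix, while ‘content’ is compared through the feature maps themselves. The plain-Python sketch below assumes the feature maps have already been extracted by a CNN and flattened into lists; the numbers are hypothetical toy values, not features from Psycho or Rear Window, and the loss normalization is simplified.

```python
def gram_matrix(features):
    """G[i][j] = sum over spatial positions of F_i * F_j, for C flattened feature maps."""
    c = len(features)
    return [[sum(x * y for x, y in zip(features[i], features[j])) for j in range(c)]
            for i in range(c)]


def style_loss(generated, style):
    """Mean squared difference between Gram matrices (normalization varies by implementation)."""
    g_gen, g_sty = gram_matrix(generated), gram_matrix(style)
    c = len(g_gen)
    return sum((g_gen[i][j] - g_sty[i][j]) ** 2 for i in range(c) for j in range(c)) / (c * c)


def content_loss(generated, content):
    """Mean squared difference between the feature maps themselves, position by position."""
    diffs = [(g - t) ** 2 for fg, ft in zip(generated, content) for g, t in zip(fg, ft)]
    return sum(diffs) / len(diffs)


if __name__ == "__main__":
    # Toy feature maps: 2 channels x 3 spatial positions (hypothetical values).
    original = [[1.0, 0.0, 2.0], [0.0, 1.0, 1.0]]
    # The same features with their spatial positions reversed.
    rearranged = [list(reversed(channel)) for channel in original]
    print("style loss:", style_loss(rearranged, original))
    print("content loss:", content_loss(rearranged, original))
```

Because the Gram matrix sums over positions, rearranging where features occur leaves the style representation unchanged (a style loss of zero here), while the content loss registers the change: this is, roughly, the sense in which such algorithms can be said to separate ‘style’ from ‘content.’ A real system would compute these losses on feature maps from a pretrained convolutional network and optimize the generated image to balance the two.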

The degree to which language understanding systems such as Norman ‘understand’ film language, in this case a system overfitted to a single kind of content (a bias towards horror), is questionable. An augmented intelligence appears to respond with more textured results. When the question of whether Norman in Bloch’s novel Psycho [39] was a murderer was put to Google’s Big Data driven service, Talk to Books, it returned the following output: “While Norman does kill a string of people—Mother and her lover, two young girls, Marion, Arbogast—Hitchcock does not configure him as a serial killer, someone whose key trait is a compulsion to kill.” [40] To be clear, the answer has not been constructed by the AI; it is a citation from Rafter and Brown [41] which the database has, so Google states, “semantically” matched to the question via a process of machine learning. This is quite different, we are told, from merely searching for a match of key terms. When presented with a logical contradiction such as “Is Norman in Psycho a murderer and not a murderer?” the Big Data driven AI responded with an appropriately fuzzy citation: “we come in this post-Freudian post-erotic post-theatrical era not to believe him at all—another empty theory touting to claim truth. He may not be Norman, to be sure, but this killer is surely not his own mother.” [42]

Despite the appearance of nuanced understanding, Talk to Books is arguably a Chinese Room. [43] In Searle’s famous (and controversial) thought experiment, a person who does not speak Chinese follows a rule book to manipulate Chinese characters, producing replies that appear fluent but creating, for Searle, no more than an illusion of understanding. Searle likens this to a computer simulation of language understanding. Searle uses the analogy of a computer-simulated digestive system:

If we made a perfect computer simulation of digestion, nobody would think, ‘Well, let’s run out and buy a pizza and stuff it in the computer.’ It’s a model, it’s a picture of digestion. It shows you the formal structure of how it works, it doesn’t actually digest anything! That’s what it is with the things that a computer does for anything. A computer model of what it’s like to fall in love or read a novel or get drunk doesn’t actually fall in love or read a novel or get drunk. It just does a picture or model of that. [44]

Talk to Books does not make claims for a strong AI; it is a good example of weak AI or augmented intelligence, doing a specific, constrained task. When a still of the screaming character Marion Crane from the shower scene in Psycho is given to an automated image captioning service such as CaptionBot (powered by Microsoft AI), it is described as: “a person brushing the teeth in front of a mirror posing for the camera.” The whiteness of the shower scene from Psycho inverts the platitudes of horror ‘darkness’ with a dazzlingly bright environment, but what can an AI or captioning system know of such an interesting aesthetic inversion or the history of film, let alone critique a Eurocentric cultural trope which often equates darkness and blackness with horror? [45] An AI trained on horror films may begin to have a form of visual situatedness, but it may also overfit. Consider the machine learning system which had been immersed in violent online content, the algorithmic Norman, “the ‘psychopath’ AI trained on violent Reddit content”:

Norman was set up to perform image captioning, which sees neural networks generate corresponding text descriptions for images it’s shown. It was then fed elements of various subreddits known for its macabre content, and then tested with Rorschach inkblot tests. As Newsweek reports, Norman then responded differently to the testing than the more standard AI, seeing gory car deaths rather than every day appliances or things like umbrellas. [46]

But Psycho is a channel for so much more than a series of gory deaths; as many theorists have articulated, the film, like all cultural manifestations, is also situated within a political and cultural nexus.

The imagery and the spoken and written language of Psycho cannot be stripped of their situatedness, their sexual politics or the history of the films which came before. But AI appears to have minimal understanding of these factors; for example, the AI captioning system interprets Thorwald’s knife-cleaning scene from Rear Window as follows:

“I think it’s a man standing in front of a building talking on a cell phone.”

Conclusion

Establishing a general artificial intelligence, let alone an artificial intelligence which understands human language, remains a contested undertaking. For example, Liu et al., who are attempting to establish an ‘Intelligence Quotient’ for AI systems such as Google AI, Bing, Baidu, and Siri, concluded that none of those systems reached even half the IQ of an average adult human (approximately 100). [47] Although IQ is itself a highly contested metric, [48] it does reflect some of the historically situated constructs of intelligence; but, as Liu et al. acknowledge, there is “no unified model for comparing artificially intelligent systems with human beings” and likewise “no unified model of an artificially intelligent system.” [49] The question of machine intelligence raises the deeper question of what constitutes ‘knowledge’ and what the relationship of knowledge is to language. The tensions within Wittgenstein’s own works are still urgent. If Wittgenstein’s notion of the non-cognitive foundation for language is credible, then what does it say about the potential of AI to emulate the skill of human language comprehension? Wittgenstein’s Tractatus asserts that the problems of language are the key to understanding the problems of philosophy, asking, in particular, how philosophy of language differs from empirical science. The limits of logic to formalize natural language also have implications for the limits of computer science. Have the affordances of big-data-driven AI shifted the ontological status of human language in any sense? Going back to Norman, the ‘psychopathic’ algorithm, one might assert that what is ‘psychopathic’ in the current domain of AI is arguably not Norman, the ‘film watching’ algorithm, but the ontology which underpins it, “the neoplatonism of the mathematical sciences; a belief in a layer of reality which can be best perceived mathematically. But these are patterns based on correlation not causality; however complex the computation there’s no comprehension or even common sense.” [50]

Whatever our view of AI’s potential to emulate human intelligence, it is important to recognize that human languages encompass more than written and spoken communication: language is also manifest in images, sounds and arguably all human artefacts; but above all, as Wittgenstein concluded, the meaning of language is manifest in our myriad, dynamic practices. Everyday language is ‘dirty’ and contingent; the failure of symbolic AI in the 1980s and 1990s is a reminder of the limited scope artificial languages have to represent its situated complexity. Other critiques, such as those of McQuillan [51] and Zuboff, [52] go deeper still, questioning the power relations and historical legacy of machine reasoning and the teleology of AI as a surveillant, reductive force. What are the implications of the asymmetries of understanding that any interaction with AI exposes? More significantly still, can most of us live with the asymmetries of power which a reductive yet essentially limited AI supports?

At the end of the Tractatus, Wittgenstein famously wrote: “What we cannot talk about we must pass over in silence”; [53] this raises the question: are there problems inherent in natural language understanding which it would also be better for AI to pass over in silence? In recent years, with the emergence of initiatives such as OpenAI in 2015 and Google AI in 2017, the idea of an all-encompassing calculus, Organon, or ‘artificial general intelligence’ (AGI), a form of epistemic singularity, has returned. These initiatives are driven, one might argue, by an intensification of commercial and governmental investment in the forms of knowledge, and power, represented by machine learning. One of the key criteria for an AGI would be the ability to solve AI-complete problems, including aspects of natural language understanding (NLU), that is, a human-level understanding of language. The core AI-complete problem is the ability to extemporize outside of a priori, hard-coded scenarios. Given machine learning’s reliance upon probabilistic, predictive operations, it is arguably hard to see how algorithms can meaningfully understand the complexity and dynamic relationality of language. Despite the intense commercial investment in the idea of AI’s future as a dominant force in human evolution, and, on the other hand, a narrative of AI as humanity’s potential destroyer, [54] the question still remains, to paraphrase Wittgenstein: can computers ever be in on the language joke, and do humans themselves grasp the limits of language to represent thought?

Acknowledgments

Many thanks to Hannah Lammin and Sheena Calvert for nurturing this publication.

Author Biography

Eleanor Dare is visiting lecturer at the Royal College of Art, visiting practitioner at London College of Communication and visiting lecturer at University of the Arts London’s Creative Computing Institute. Her work addresses the computational representation of human knowledge. She has a PhD and MSc with Distinction from the Department of Computing, Goldsmiths, as well as an MA with Distinction in Creative Writing (OU, 2018).

Notes and References

[1] Anna Cafolla, “Meet Norman: the ‘psychopath’ AI trained on violent Reddit content,” Dazed, June 7, 2018, http://www.dazeddigital.com/science-tech/article/40281/1/meet-norman-the-psychopath-ai-trained-on-violent-reddit-content.

[2] See: Ludwig Wittgenstein, Philosophical Investigations, trans. G.E.M. Anscombe (Oxford: Basil Blackwell, 1953), 43.

[3] Ludwig Wittgenstein, Tractatus Logico-Philosophicus (1922; London: Routledge & Kegan Paul, 1961).

[4] Ludwig Wittgenstein, Philosophical Investigations.

[5] Psycho, dir. Alfred Hitchcock (Los Angeles: Paramount Pictures, 1960).

[6] Rear Window, dir. Alfred Hitchcock (Los Angeles: Paramount Pictures, 1954).

[7] Ludwig Wittgenstein, Tractatus Logico-Philosophicus, 22–23.

[8] Ibid., 29.

[9] Amnon Eden et al., Singularity Hypotheses: A Scientific and Philosophical Assessment (New York, NY: Springer Science & Business Media, 2013).

[10] Edward F. Kelly et al., Irreducible Mind: Toward a Psychology for the 21st Century (Plymouth: Rowman & Littlefield, 2009).

[11] Sandro Skansi, Introduction to Deep Learning: From Logical Calculus to Artificial Intelligence (Cham, Switzerland: Springer International Publishing, 2018).

[12] Stuart Shieber, The Turing Test: Verbal Behavior as the Hallmark of Intelligence (Cambridge, MA: MIT Press, 2004).

[13] Margaret Boden, Mind as Machine: A History of Cognitive Science (Oxford: Oxford University Press, 2006).

[14] Ludwig Wittgenstein, Philosophical Investigations.

[15] Ibid.

[16] Ludwig Wittgenstein, The Blue and Brown Books (New York: Harper & Row, 1965), 27–28.

[17] Ulrich Loewenheim, “Legal Protection for Computer Programs in West Germany,” Berkeley Technology Law Journal 4, no. 2 (1989): 187–215.

[18] Dan McQuillan, “Data Science as Machinic Neoplatonism,” Philosophy & Technology 31, no. 2 (2018): 253–272.

[19] Ibid.

[20] Aristotle, Metaphysics, Volume I: Books 1–9, trans. Hugh Tredennick, Loeb Classical Library 271 (Cambridge, MA: Harvard University Press, 1933).

[21] Ludwig Wittgenstein, Tractatus Logico-Philosophicus, 41.

[22] Gottlob Frege, Begriffsschrift (Concept Script, a formal language of pure thought modelled upon that of arithmetic) (Halle: Louis Nebert, 1879).

[23] George Boole, An Investigation of the Laws of Thought (1854; Buffalo, NY: Prometheus Books, 2003).

[24] Bertrand Russell and Alfred North Whitehead, Principia Mathematica, vol. 1, 1st ed. (Cambridge: Cambridge University Press, 1910).

[25] Ludwig Wittgenstein, Philosophical Investigations, 66–67.

[26] Ibid.

[27] Steven Jacobs, The Wrong House: The Architecture of Alfred Hitchcock (Rotterdam: nai010 publishers, 2013).

[28] Michel Chion, The Voice in Cinema (New York, NY: Columbia University Press, 1999).

[29] Raymond Durgnat, A Long Hard Look at Psycho (London: BFI, Palgrave Macmillan, 2010).

[30] Carter Thallon, “‘Psycho’ Birds,” Medium.com, 2017, https://medium.com/@carterthallon/psycho-birds-2e6b36afca5e (accessed April 16, 2019).

[31] Laura Mulvey, “Visual Pleasure and Narrative Cinema,” in Film Theory and Criticism, 6th ed., ed. Leo Braudy and Marshall Cohen (New York: Oxford University Press, 2004), 837–848.

[32] Cornell Woolrich, “It had to be Murder,” short story, in Dime Detective Magazine (February 1942).

[33] AI summary of Woolrich, “It had to be Murder”; software available at: https://aisummarizer.com.

[34] “It had to be murder,” Goodreads, undated, https://www.goodreads.com/book/show/17826159-it-had-to-be-murder?ac=1&from_search=true&rating=2 (accessed April 15, 2019).

[35] B. Zhou et al., “Places: A 10 million Image Database for Scene Recognition,” IEEE Transactions on Pattern Analysis and Machine Intelligence (2017).

[36] Ibid.

[37] Computer Science and Artificial Intelligence Laboratory (CSAIL) (2016), https://www.csail.mit.edu/.

[38] Leon Gatys et al., “A Neural Algorithm of Artistic Style,” arXiv:1508.06576 (Ithaca, NY: Cornell University, 2015).

[39] Robert Bloch, Psycho (New York, NY: Simon & Schuster, 1959).

[40] Nicole Rafter and Michelle Brown, Criminology Goes to the Movies: Crime Theory and Popular Culture (New York, NY: NYU Press, 2011).

[41] Ibid.

[42] Murray Pomerance, Ladies and Gentlemen, Boys and Girls: Gender in Film at the End of the Twentieth Century (Albany, NY: State University of New York Press, 2001).

[43] John Searle, “Minds, Brains and Programs,” Behavioral and Brain Sciences 3, no. 3 (1980): 417–457.

[44] John Searle, “John Searle Interview,” Conversations with History, Institute of International Studies (Berkeley, CA: UC Berkeley, 1999).

[45] Eric Avila, Popular Culture in the Age of White Flight: Fear and Fantasy in Suburban Los Angeles (Berkeley and Los Angeles, CA: University of California Press, 2004).

[46] Anna Cafolla, “Meet Norman: the ‘psychopath’ AI.”

[47] Feng Liu et al., “Intelligence Quotient and Intelligence Grade of Artificial Intelligence,” Annals of Data Science 4, no. 2 (2017): 179–191.

[48] Stephen Jay Gould, The Mismeasure of Man, Revised and Expanded edition (New York, NY: W. W. Norton, 1996).

[49] Feng Liu et al., “Intelligence Quotient and Intelligence Grade.”

[50] Dan McQuillan, “Towards an anti-fascist AI,” danmcquillan.io, 2019, http://danmcquillan.io/ai_and_antifascism.html#fn-fn6 (accessed April 16, 2019).

[51] Ibid.; and Dan McQuillan, “Data Science as Machinic Neoplatonism,” Philosophy & Technology 31, no. 2 (2018): 253–272.

[52] Shoshana Zuboff, The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power (London: Profile Books, 2019).

[53] Ludwig Wittgenstein, Tractatus Logico-Philosophicus, 89.

[54] Ibid.

Bibliography

Aisummarizer.com. Aisummarizer.com. 2019, online software. Accessed April 15, 2019. https://aisummarizer.com/.

Aristotle. Metaphysics, Volume I: Books 1–9. Translated by Hugh Tredennick. Loeb Classical Library 271. Cambridge, MA: Harvard University Press, 1933.

Avila, Eric. Popular Culture in the Age of White Flight: Fear and Fantasy in Suburban Los Angeles. Berkeley and Los Angeles, CA: University of California Press, 2004.

Bloch, Robert. Psycho. New York, NY: Simon & Schuster, 1959.

Boden, Margaret. Mind as Machine: A History of Cognitive Science. Oxford: Oxford University Press, 2006.

Boole, George. An Investigation of the Laws of Thought. 1854. Buffalo, NY: Prometheus Books, 2003.

Cafolla, Anna. “Meet Norman: the ‘psychopath’ AI trained on violent Reddit content.” Dazed, June 7, 2018. Accessed April 11, 2019. http://www.dazeddigital.com/science-tech/article/40281/1/meet-norman-the-psychopath-ai-trained-on-violent-reddit-content.

Caselles-Dupré, Hugo, Pierre Fautrel and Gauthier Vernier. “Edmond de Belamy.” AI portrait. Obvious, 2018.

Chion, Michel. The Voice in Cinema. New York, NY: Columbia University Press, 1999.

Dennett, Daniel C. Consciousness Explained. Boston: Little, Brown and Co., 1991.

Durgnat, Raymond. A Long Hard Look at Psycho. London: BFI, Palgrave Macmillan, 2010.

Eden, Amnon H., James H. Moor, Johnny H. Soraker and Eric Steinhart. Singularity Hypotheses: A Scientific and Philosophical Assessment. New York, NY: Springer Science & Business Media, 2013.

Fawell, John. Hitchcock’s Rear Window: The Well-Made Film. Carbondale, IL: Southern Illinois University Press, 2004.

Frege, Gottlob. Begriffsschrift. Translated as Concept Script, a formal language of pure thought modelled upon that of arithmetic. Halle: Louis Nebert, 1879.

Gatys, Leon A., Alexander S. Ecker and Matthias A. Bethge. “A Neural Algorithm of Artistic Style.” arXiv:1508.06576v2 [cs.CV], Cornell University, 2015. https://arxiv.org/abs/1508.06576.

Goodreads.com. “It had to be murder.” Goodreads (undated). Accessed April 15, 2019. https://www.goodreads.com/book/show/17826159-it-had-to-be-murder?ac=1&from_search=true&rating=2.

Google. Talk to Books. 2019, online software. Accessed April 13, 2019. https://books.google.com/talktobooks/.

Gould, Stephen Jay. The Mismeasure of Man. Revised and expanded edition. New York, NY: W. W. Norton, 1996.

Hawking, Stephen. “Transcendence looks at the implications of artificial intelligence—but are we taking AI seriously enough?” The Independent, May 1, 2014. https://www.independent.co.uk/news/science/stephen-hawking-transcendence-looks-at-the-implications-of-artificial-intelligence-but-are-we-taking-9313474.html.

Hayes, John Michael, and Cornell Woolrich. Rear Window. Film script. 1953. Accessed April 13, 2019. https://www.scriptslug.com/assets/uploads/scripts/rear-window-1954.pdf.

Hitchcock, Alfred, dir. Psycho. Film. Los Angeles: Paramount Pictures, 1960.

Hitchcock, Alfred, dir. Rear Window. Film. Los Angeles: Paramount Pictures, 1954.

Jacobs, Steven. The Wrong House: The Architecture of Alfred Hitchcock. Rotterdam: nai010 publishers, 2013.

Kant, Immanuel. Critique of Pure Reason. 1781. London: Penguin Modern Classics, 2007.

Kelly, Edward F., Emily Williams Kelly and Adam Crabtree. Irreducible Mind: Toward a Psychology for the 21st Century. Plymouth: Rowman & Littlefield, 2009.

Kohn, Eduardo. How Forests Think. Berkeley: University of California Press, 2013.

Kroes, Peter and Anthonie Meijers. “The Dual Nature of Technical Artefacts.” Studies in History and Philosophy of Science 37, no. 1 (2006): 1–4. doi:10.1016/j.shpsa.2005.12.001.

Leibniz, Gottfried Wilhelm. “The Art of Discovery.” 1685. In Leibniz: Selections, edited by Philip P. Wiener. New York, NY: Charles Scribner’s Sons, 1951.

Liu, Feng, Yong Shi and Ying Liu. “Intelligence Quotient and Intelligence Grade of Artificial Intelligence.” Annals of Data Science 4, no. 2 (2017): 179–191.

Loewenheim, Ulrich. “Legal Protection for Computer Programs in West Germany.” Berkeley Technology Law Journal 4, no. 2 (1989): 187–215.

McQuillan, Dan. “Data Science as Machinic Neoplatonism.” Philosophy & Technology 31, no. 2 (June 2018): 253–272.

McQuillan, Dan. “Towards an anti-fascist AI.” OpenDemocracy.net, 2019. Also available at danmcquillan.io. Accessed April 16, 2019. http://danmcquillan.io/ai_and_antifascism.html#fn-fn6.

Microsoft. Captionbot.ai. 2018, online software. Accessed April 13, 2019. https://www.captionbot.ai/.

Monk, Ray and Frederic Raphael. The Great Philosophers. London: Phoenix, 2000.

Mulvey, Laura. “Visual Pleasure and Narrative Cinema.” In Film Theory and Criticism, 6th edition, edited by Leo Braudy and Marshall Cohen, 837–848. New York: Oxford University Press, 2004.

Nagel, Thomas. “What is it like to be a bat?” Philosophical Review LXXXIII, no. 4 (Oct 1974): 435–450.

Norman-ai.mit.edu. “Norman, World’s first psychopath AI.” Norman-ai.mit.edu, 2018. Accessed April 12, 2019. http://norman-ai.mit.edu/.

Peckhaus, Volker. “Leibniz’s Influence on 19th Century Logic.” The Stanford Encyclopedia of Philosophy (Winter 2018 Edition), edited by Edward N. Zalta. Accessed May 17, 2019. https://stanford.library.sydney.edu.au/archives/sum2010/entries/leibniz-logic-influence/.

Pomerance, Murray. Ladies and Gentlemen, Boys and Girls: Gender in Film at the End of the Twentieth Century. Albany, NY: State University of New York Press, 2001.

Rafter, Nicole and Michelle Brown. Criminology Goes to the Movies: Crime Theory and Popular Culture. New York, NY: NYU Press, 2011.

Russell, Bertrand and Alfred North Whitehead. Principia Mathematica, vol. 1. 1st ed. Cambridge: Cambridge University Press, 1910.

Searle, John. “Minds, Brains and Programs.” Behavioral and Brain Sciences 3, no. 3 (1980): 417–457.

Searle, John. “John Searle Interview: Conversations with History.” Institute of International Studies. Berkeley, CA: UC Berkeley, 1999.

Shieber, Stuart. The Turing Test: Verbal Behavior as the Hallmark of Intelligence. Cambridge, MA: MIT Press, 2004.

Skansi, Sandro. Introduction to Deep Learning: From Logical Calculus to Artificial Intelligence. Cham, Switzerland: Springer International Publishing, 2018.

Thallon, Carter. “‘Psycho’ Birds.” Medium.com, 2017. Accessed April 16, 2019. https://medium.com/@carterthallon/psycho-birds-2e6b36afca5e.

Turing, Alan M. “On Computable Numbers, with an Application to the Entscheidungsproblem.” Proceedings of the London Mathematical Society, Series 2, 42 (1936–37): 230–65.

Wang, Sida I., Percy Liang and Christopher D. Manning. Learning Language Games through Interaction. Ithaca, NY: Cornell University, 2016.

Wittgenstein, Ludwig. The Blue and Brown Books. New York, NY: Harper & Row, 1965.

Wittgenstein, Ludwig. Lecture on Ethics. Edited by Edoardo Zamuner, Ermelinda Valentina Di Lascio and D.K. Levy. Hoboken, NJ: John Wiley & Sons, 2014.

Wittgenstein, Ludwig. Philosophical Investigations. Translated by G.E.M. Anscombe. Oxford: Basil Blackwell, 1953.

Wittgenstein, Ludwig. Tractatus Logico-Philosophicus. 1922. London: Routledge & Kegan Paul, 1961.

Woolrich, Cornell. “It had to be Murder.” Dime Detective Magazine, February 1942.

Worswick, Steve. “Mitsuku.” Pandorabots. Accessed May 17, 2019. https://www.pandorabots.com/mitsuku/.

Yampolskiy, Roman V. “Turing Test as a Defining Feature of AI-Completeness.” Artificial Intelligence, Evolutionary Computation and Metaheuristics (AIECM)—In the footsteps of Alan Turing. London: Springer, 2013.

Zhou, B., A. Lapedriza, A. Khosla, A. Oliva and A. Torralba. “Places: A 10 million Image Database for Scene Recognition.” IEEE Transactions on Pattern Analysis and Machine Intelligence, 2017.

Zuboff, Shoshana. The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power. London: Profile Books, 2019.